PaperBanana: Automated Scientific Paper Analysis & Figure Generation for Researchers

- Published on

- pythonicnerds--3 min read

Folks at google released a new paper on paperbanana which is a new AI framework designed to automatically generate publication-ready academic figures — like methodology diagrams and statistical plots — directly from research text.

How it works?

It uses teams of AI agent that mimic how humans design and research the visual, and it helps researchers hours of manual drawing work.

Basically anyone can give a method description + caption and it produces a professional diagrams or plots that researchers can use in their paper.

This are the AI agents and their role on how they work.

Agent | Role |

|---|---|

Retriever | Find similar reference figures from a database of real diagram |

Planner | Reads your text and drafts a details "figure plan" |

Stylist | Applies academic-style conventions |

Visualizer | Generate the actual visual |

Critic | Reviews and refines the output over multiple iterations |

How to use this?

llmresearcher has a open source implementation of paperbanana which you can check out at https://github.com/llmsresearch/paperbanana their readme is good which you can use to get started with your own use-case.

If you can try this out at the google colab also here the link to it: https://colab.research.google.com/drive/18Z840n3L566j4x5Ij6Oqtr2UXAZO0gli?usp=sharing

The only requirement is to have the OPEN AI API Key or the Google Gemini API Key I tried the free version but you'll face lot of Quota exception on a free tier I'll recommend add a billing or try out Open AI 5$ free credit.

Some of the screenshots how the generated diagrams:

Input Text:

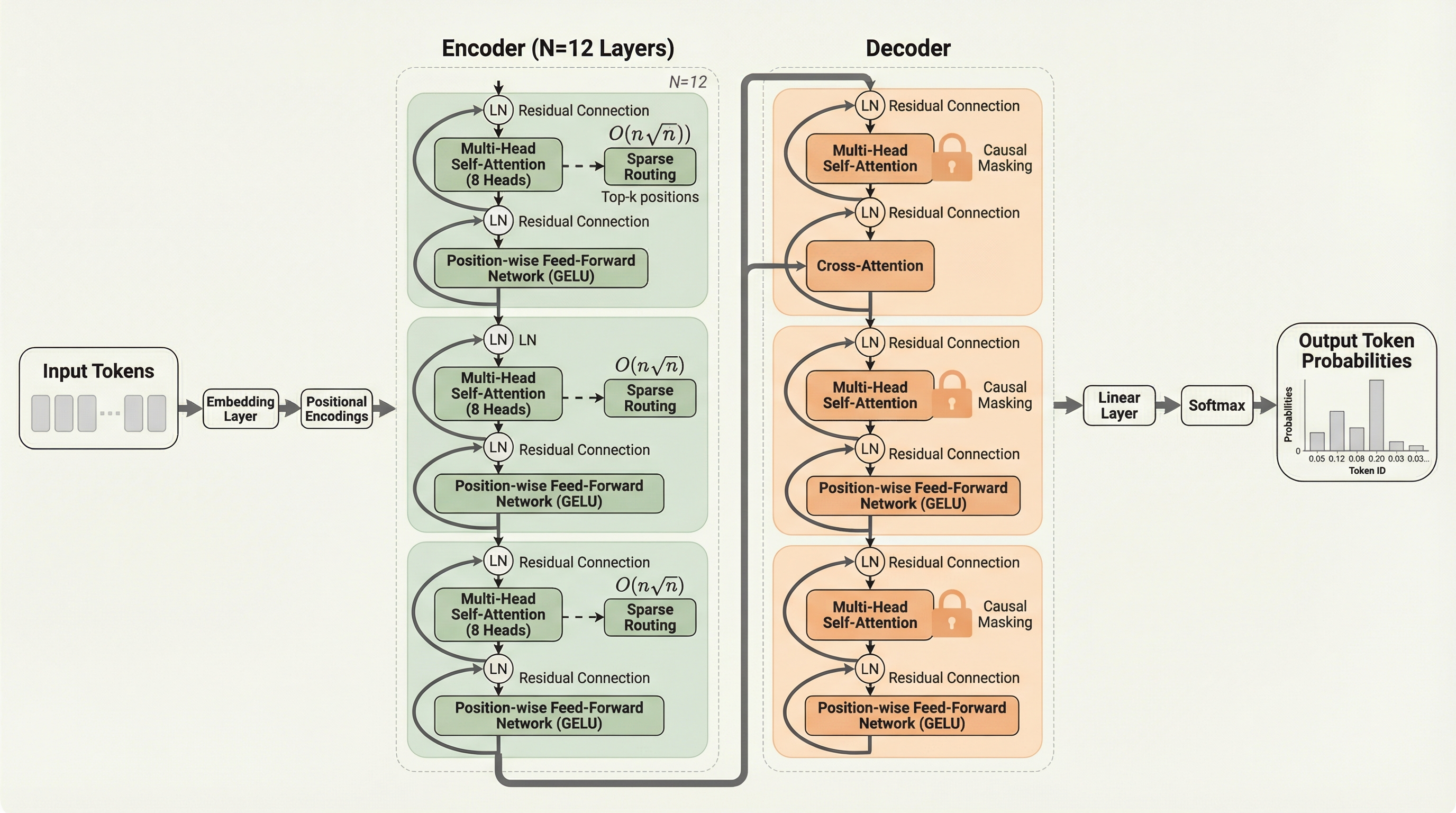

Our model builds on the Transformer architecture with several key modifications.

The input tokens are first embedded through a learned embedding layer and combined with sinusoidal positional encodings. The combined representations are passed through a stack of N=12 encoder layers, each consisting of multi-head self-attention (8 heads) followed by a position-wise feed-forward network with GELU activation. Layer normalization is applied before each sub-layer (Pre-LN), and residual connections wrap each sub-layer.

The decoder follows a similar structure but includes an additional cross-attention layer between the self-attention and feed-forward sub-layers. The cross-attention attends to the encoder's output representations. Causal masking in the decoder's self-attention prevents attending to future positions.

We introduce a novel sparse attention pattern in the encoder that reduces the quadratic complexity to O(n sqrt(n)) by attending to a subset of positions selected through a learned routing mechanism. The router predicts attention scores for all positions and selects the top-k positions for each query.

The final decoder output is projected through a linear layer followed by softmax to produce output token probabilities.